Low-Latency Spiking Neural Networks

The QFFS framework and Dynamic Confidence decoding for ultra-low-latency SNN inference on ImageNet.

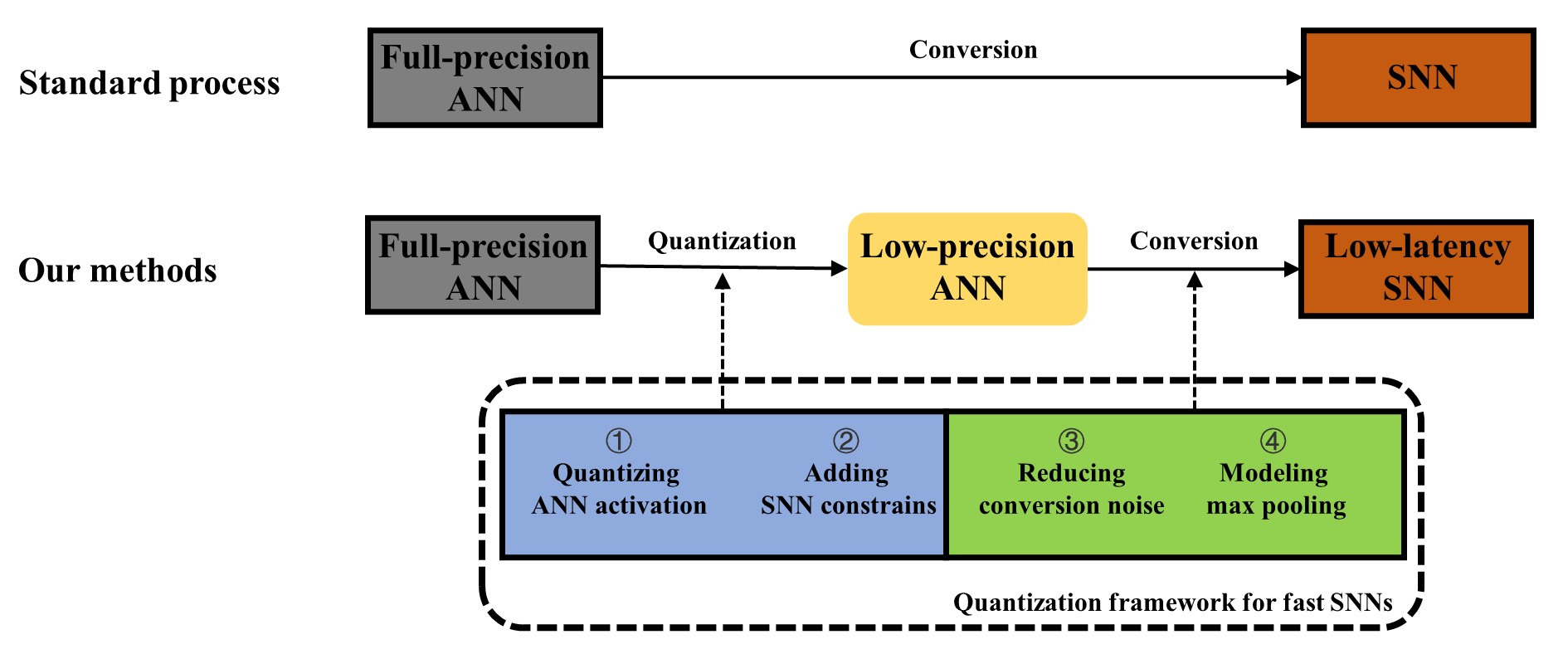

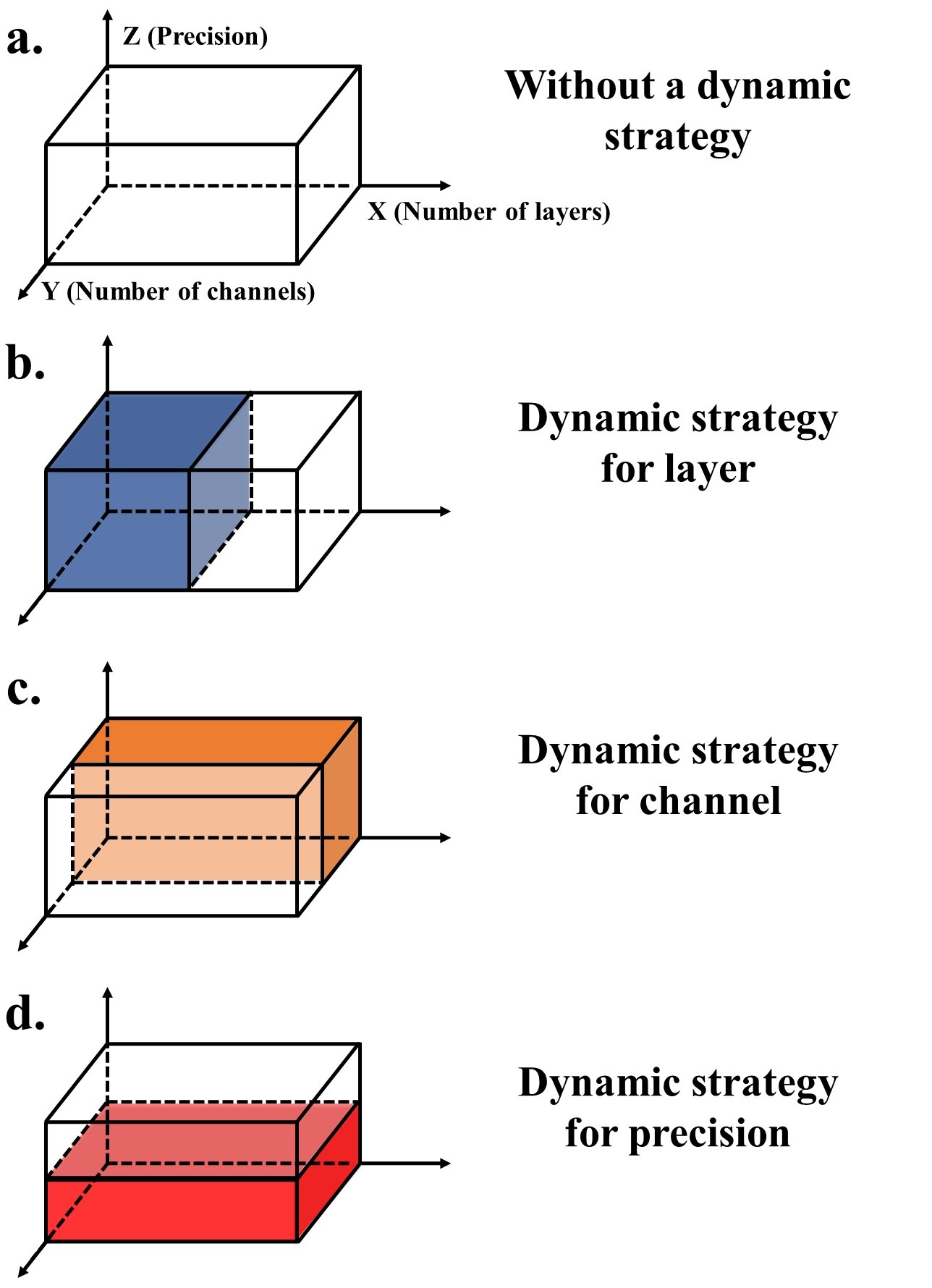

Quantization Framework for Fast SNNs (QFFS)

Compresses ANN activation precision to 2 bits and suppresses “occasional noise” in the converted SNN. First ANN-to-SNN conversion approach to achieve competitive ImageNet accuracy in fewer than 6 time steps.
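To make the 2-bit activation idea concrete, here is a minimal sketch of a uniform quantizer applied to a clipped ReLU output. It is a generic illustration, not the QFFS training procedure itself: the function name `quantize_activation`, the `clip` ceiling of 1.0, and the round-to-nearest rule are all assumptions made for this example.

```python
import numpy as np

def quantize_activation(x, bits=2, clip=1.0):
    """Uniformly quantize a clipped ReLU activation (hypothetical helper).

    With bits=2 the output takes one of 4 values: 0, clip/3, 2*clip/3, clip.
    """
    levels = 2 ** bits - 1            # number of nonzero levels (3 for 2-bit)
    x = np.clip(x, 0.0, clip)         # ReLU plus an upper clipping bound
    step = clip / levels              # spacing between adjacent levels
    return np.round(x / step) * step  # snap each value to the nearest level

activations = np.array([-0.5, 0.1, 0.4, 0.9, 2.0])
quantized = quantize_activation(activations)
```

Negative inputs map to 0 and anything above `clip` saturates at the top level, so every activation ends up on one of four discrete values.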

Dynamic Confidence

A runtime optimization that decodes a confidence value from the SNN's output spikes at each time step and terminates inference early once that confidence is high enough.
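The early-termination idea can be sketched as a loop that accumulates the output layer's activity over time steps and stops once a confidence score crosses a threshold. This is a simplified illustration under stated assumptions, not the published method: using a softmax over accumulated outputs as the confidence, the fixed `threshold`, and the function name `infer_with_early_exit` are all hypothetical choices for this example.

```python
import numpy as np

def softmax(z):
    z = z - np.max(z)                 # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

def infer_with_early_exit(step_outputs, threshold=0.95):
    """Hypothetical early-exit loop.

    step_outputs: array of shape (T, num_classes), the output layer's
    activity at each of T >= 1 time steps.
    Returns (predicted_class, steps_used).
    """
    step_outputs = np.asarray(step_outputs, dtype=float)
    if step_outputs.shape[0] == 0:
        raise ValueError("need at least one time step")
    accumulated = np.zeros(step_outputs.shape[1])
    steps_used = 0
    for out in step_outputs:
        accumulated += out            # integrate output activity over time
        steps_used += 1
        confidence = softmax(accumulated).max()
        if confidence >= threshold:   # confident enough: stop early
            break
    return int(np.argmax(accumulated)), steps_used

# An "easy" input exits early; an ambiguous one runs all T steps.
easy = np.tile([3.0, 0.0, 0.0], (4, 1))
hard = np.ones((4, 3))
easy_result = infer_with_early_exit(easy)
hard_result = infer_with_early_exit(hard)
```

Because easy inputs stop after fewer steps while hard inputs use the full budget, the average number of time steps over a dataset can be fractional, as in the results below.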

Key Results:

- Reduces latency by 26-36% on ImageNet with no accuracy loss

- VGG-16 QFFS: 72.52% accuracy; average time steps reduced from 4 to 2.86 (29% saving)

- ResNet-50 QFFS: 73.17% accuracy; average time steps reduced from 6 to 4.42 (26% saving)
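The per-model savings quoted above follow directly from the step counts. As a quick check, the relative saving is (full steps - average steps) / full steps; the helper name `latency_saving` is just for illustration.

```python
def latency_saving(full_steps, avg_steps):
    """Percentage of time steps saved relative to the fixed-step baseline."""
    return 100.0 * (full_steps - avg_steps) / full_steps

vgg16_saving = latency_saving(4, 2.86)     # VGG-16 QFFS
resnet50_saving = latency_saving(6, 4.42)  # ResNet-50 QFFS
print(round(vgg16_saving, 1), round(resnet50_saving, 1))
```

These come out to roughly 28.5% and 26.3%, which round to the 29% and 26% figures listed.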

Publications:

- Li C, Ma L, Furber S. Quantization Framework for Fast Spiking Neural Networks. Frontiers in Neuroscience, 2022.

- Li C, Jones E, Furber S. Unleashing the Potential of Spiking Neural Networks with Dynamic Confidence. ICCV 2023.